What is Probability?

Chapter: Biostatistics for the Health Sciences: Basic Probability

Probability is a mathematical construction that determines the likelihood of occurrence of events that are subject to chance.


When we say an event is subject to chance, we mean that the outcome is in doubt and there are at least two possible outcomes.

Probability has its origins in gambling. Games of chance provide good examples of what the possible events are. For example, we may want to know the chance of throwing a sum of 11 with two dice, the probability that a ball will land on red on a roulette wheel, the chance that the Yankees will win today's baseball game, or the chance of drawing a full house in a game of poker.

In the context of health science, we could be interested in the probability that a sick patient who receives a new medical treatment will survive for five or more years. Knowing the probability of these outcomes helps us make decisions, for example, whether or not the sick patient should undergo the treatment.

We take some probabilities for granted. Most people think that the probability that a pregnant woman will have a boy rather than a girl is 0.50. Possibly, we think this because the world's population seems to be very close to 50–50. In fact, vital statistics show that the probability of giving birth to a boy is 0.514.

Perhaps this is nature's way of maintaining balance, since females tend to live longer than males. So although 51.4% of newborns are boys, males may in fact make up less than 50% of all 50-year-old people. Therefore, when one looks at the sex distribution averaged over all ages, the ratio may actually be close to 50–50 even though more than 51% of the children starting out in the world are boys.

Another illustration of probability lies in the fact that many events in life are uncertain. We do not know whether it will rain tomorrow or when the next earthquake will hit. Probability is a formal way to measure the chance of these uncertain events. Based on mathematical axioms and theorems, probability also involves a mathematical model to describe the mechanism that produces uncertain or random outcomes.

To each event, our probability model will assign a number between 0 and 1. The value 0 corresponds to events that cannot happen and the value 1 to events that are certain.

A probability value between 0 and 1, e.g., 0.6, assigned to an event has a frequency interpretation. When we assign a probability, usually we are dealing with a one-time occurrence. A probability often refers to events that may occur in the future.

Think of the occurrence of an event as the outcome of an experiment. Assume that we could replicate this experiment as often as we want. Then, if we claim a probability of 0.6 for the event, we mean that after conducting this experiment many times we would observe that the event occurred in close to 60% of the experiments. Consequently, in approximately 40% of the experiments the event would not occur. These frequency notions of probability are important, as they will come up again when we apply them to statistical inference.
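To see the frequency interpretation at work, the sketch below simulates an experiment in which the event has probability 0.6 (the value used above) and reports the observed fraction of occurrences for an increasing number of replications. Python's `random` module stands in for a real, repeatable experiment; the function name `relative_frequency` and the fixed seed are illustrative choices, not part of the text.

```python
import random


def relative_frequency(p: float, n_trials: int, seed: int = 0) -> float:
    """Simulate n_trials independent replications of an experiment in which
    the event occurs with probability p; return the fraction of replications
    in which the event occurred."""
    rng = random.Random(seed)  # seeded so the run is reproducible
    # rng.random() is uniform on [0, 1), so it falls below p with probability p
    occurrences = sum(1 for _ in range(n_trials) if rng.random() < p)
    return occurrences / n_trials


for n in (10, 1_000, 100_000):
    print(n, relative_frequency(0.6, n))
```

With more replications the printed fraction should settle closer to 0.6, although any single run will differ slightly from it.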

The probability of an event A is determined by first defining the set of all possible elementary events, associating a probability with each elementary event, and then summing the probabilities of all the elementary events that imply the occurrence of A. The elementary events are distinct and are called mutually exclusive.

The term "mutually exclusive" means that for elementary events A₁ and A₂, if A₁ happens then A₂ cannot happen, and vice versa. This property is necessary to sum probabilities, as we will see later. Suppose we have an event A such that if A₁ occurs, A₂ cannot occur, and if A₂ occurs, A₁ cannot occur (i.e., A₁ and A₂ are mutually exclusive elementary events), and that A occurs exactly when A₁ or A₂ occurs. The probability of A occurring, denoted P(A), satisfies the equation P(A) = P(A₁) + P(A₂).
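The recipe just described (list the elementary events, attach a probability to each, and add up those that imply A) can be sketched in a few lines of Python. The loaded die and its unequal probabilities are hypothetical, chosen only to show that the summation rule does not require equally likely outcomes; `prob` is an illustrative helper name, and `fractions.Fraction` keeps the arithmetic exact.

```python
from fractions import Fraction

# Hypothetical loaded die: the six faces are the mutually exclusive
# elementary events; these probabilities are assumed for illustration.
loaded_die = {
    1: Fraction(1, 10),
    2: Fraction(1, 10),
    3: Fraction(1, 10),
    4: Fraction(1, 10),
    5: Fraction(1, 10),
    6: Fraction(1, 2),
}


def prob(event, space):
    """Sum the probabilities of the elementary outcomes that imply the event.
    `event` is a predicate applied to a single outcome."""
    return sum((p for outcome, p in space.items() if event(outcome)), Fraction(0))


assert sum(loaded_die.values()) == 1  # a valid model: probabilities sum to 1

# A = "the roll is at least 5" occurs exactly through A1 = {5} or A2 = {6},
# which are mutually exclusive, so P(A) = P(A1) + P(A2).
p_a = prob(lambda face: face >= 5, loaded_die)
print(p_a)                                   # 3/5
print(p_a == loaded_die[5] + loaded_die[6])  # True
```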

We can make this equation even simpler if all the elementary events have the same chance of occurring. In that case, we say that the events are equally likely. If there are k distinct elementary events and they are equally likely, then each elementary event has a probability of 1/k.

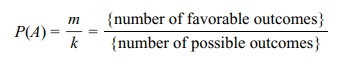

Suppose we denote the number of favorable outcomes as m; that is, there are m favorable elementary events, and the event A occurs when any one of these m elementary events occurs, where m ≤ k. This means that there are k equally likely, distinct elementary events and that m of them are favorable to A.

Thus, the probability that A will occur is defined as the sum of the probabilities of the m elementary events associated with A. Since each of these has probability 1/k, this probability is just m/k. Because m represents the number of distinct ways that A can occur and k represents the total number of possible outcomes, a common description of probability in this simple model is

P(A) = m/k = (number of favorable outcomes)/(number of possible outcomes)
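The counting rule P(A) = m/k translates directly into code: count the k equally likely outcomes, count the m that are favorable to A, and divide. The sketch below assumes a single fair die as the example and uses a hypothetical helper name, `classical_prob`; `Fraction` keeps results as exact ratios like those in the text.

```python
from fractions import Fraction


def classical_prob(outcomes, event):
    """P(A) = m/k for k distinct, equally likely elementary outcomes,
    where m counts the outcomes for which the predicate `event` holds."""
    k = len(outcomes)
    m = sum(1 for outcome in outcomes if event(outcome))
    return Fraction(m, k)


fair_die = [1, 2, 3, 4, 5, 6]  # k = 6 equally likely faces (assumed fair)
print(classical_prob(fair_die, lambda face: face == 3))      # 1/6, i.e., 1/k
print(classical_prob(fair_die, lambda face: face % 2 == 0))  # 1/2
print(classical_prob(fair_die, lambda face: face <= 2))      # 1/3
```

The first call shows the special case m = 1, where the formula reduces to the 1/k probability of a single elementary event.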

Example 1: Tossing a Coin Twice. Assume we have a fair coin (one that favors neither heads nor tails), and denote heads by H and tails by T. The assumption of fairness implies that on each trial the probability of heads is P(H) = 1/2 and the probability of tails is P(T) = 1/2. In addition, we assume that the trials are statistically independent, meaning that the outcome of one trial does not depend on the outcome of any other trial. Shortly, we will give a mathematical definition of statistical independence, but for now just think of it as indicating that the trials do not influence each other.

Our coin toss experiment has four equally likely elementary outcomes. These outcomes are denoted as ordered pairs: {H, H}, {H, T}, {T, H}, and {T, T}. For example, the pair {H, T} denotes a head on the first trial and a tail on the second. Because of the independence assumption, all four elementary events have a probability of 1/4. You will learn how to calculate these probabilities in the next section.

Suppose we want to know the probability of the event A = {one head and one tail}. A occurs if {H, T} or {T, H} occurs. So P(A) = 1/4 + 1/4 = 1/2.

Now, take the event B = {at least one head occurs}. B can occur if any of the elementary events {H, H}, {H, T}, or {T, H} occurs. So P(B) = 1/4 + 1/4 + 1/4 = 3/4.
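The two-toss calculation can be checked by enumerating the four ordered outcomes with `itertools.product` and applying the m/k rule to each event. This is a sketch that assumes the fair, independent tosses of Example 1, which is what makes the four outcomes equally likely; the helper name `prob` is illustrative.

```python
from fractions import Fraction
from itertools import product

# The four equally likely ordered outcomes of two tosses of a fair coin:
# ('H', 'H'), ('H', 'T'), ('T', 'H'), ('T', 'T')
outcomes = list(product("HT", repeat=2))


def prob(event):
    """Count the ordered pairs favorable to the event and divide by 4."""
    favorable = [o for o in outcomes if event(o)]
    return Fraction(len(favorable), len(outcomes))


p_a = prob(lambda o: o.count("H") == 1)  # A: one head and one tail
p_b = prob(lambda o: "H" in o)           # B: at least one head
print(p_a, p_b)  # 1/2 3/4
```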

Example 2: Roll Two Dice One Time. We assume that the two dice are independent of one another. We sum the two faces and are interested in sums of 7, 11, or 2. Determine the probability of rolling each of these sums: a 7, an 11, and a 2.

For each die there are 6 faces numbered with 1 to 6 dots. Each face is assumed to have an equal 1/6 chance of landing up. In this case, there are 36 equally likely elementary outcomes for a pair of dice. These elementary outcomes are denoted by pairs, such as {3, 5}, which denotes a roll of 3 on the first die and 5 on the second. The 36 elementary outcomes are

{1, 1}, {1, 2}, {1, 3}, {1, 4}, {1, 5}, {1, 6},
{2, 1}, {2, 2}, {2, 3}, {2, 4}, {2, 5}, {2, 6},
{3, 1}, {3, 2}, {3, 3}, {3, 4}, {3, 5}, {3, 6},
{4, 1}, {4, 2}, {4, 3}, {4, 4}, {4, 5}, {4, 6},
{5, 1}, {5, 2}, {5, 3}, {5, 4}, {5, 5}, {5, 6},
{6, 1}, {6, 2}, {6, 3}, {6, 4}, {6, 5}, and {6, 6}.

Let A denote a sum of 7, B a sum of 11, and C a sum of 2. All we have to do is identify and count all the elementary outcomes that lead to 7, 11, and 2. Dividing each count by 36 then gives us the answers:

Seven occurs if we have {1, 6}, {2, 5}, {3, 4}, {4, 3}, {5, 2}, or {6, 1}. That is, the probability of 7 is 6/36 = 1/6 ≈ 0.167. Eleven occurs only if we have {5, 6} or {6, 5}, so the probability of 11 is 2/36 = 1/18 ≈ 0.056. For 2 (also called snake eyes), we must roll {1, 1}, so a 2 occurs only with probability 1/36 ≈ 0.028.
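The same counting can be done mechanically: enumerate all 36 ordered outcomes, tally how many produce each sum, and divide each tally by 36. This sketch assumes two fair, independent dice as in Example 2; `collections.Counter` does the tallying.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# All 36 equally likely ordered outcomes (first die, second die).
outcomes = list(product(range(1, 7), repeat=2))
counts = Counter(a + b for a, b in outcomes)  # number of outcomes giving each sum

for total in (7, 11, 2):
    p = Fraction(counts[total], len(outcomes))
    print(f"P(sum = {total}) = {p} ≈ {float(p):.3f}")
# P(sum = 7) = 1/6 ≈ 0.167
# P(sum = 11) = 1/18 ≈ 0.056
# P(sum = 2) = 1/36 ≈ 0.028
```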

The next three sections will provide the formal rules for these probability calculations in general situations.
